How to Create Effective Multiple Choice Questions

There are many advantages to using multiple choice (MC) questions as an evaluation or assessment strategy. They are easy to set up, easy to mark, and allow teachers to cover a wide range of topics. Past assessments are often available as a reference when creating new test questions. Multiple choice questions also enable students to practice a variety of skills, including problem-solving, fact recall, and creative thinking. Because multiple choice assessments are so valuable, it is important to develop reliable questions that accurately measure students’ understanding.

A few of the advantages of multiple choice questions are listed below. For this list, we will refer to multiple choice test questions as items.

- Items are effective in testing various levels of understanding, because questions can range from simple fact-checking to more in-depth analysis. However, the multiple choice format limits how knowledge and understanding can be tested, because the answers are predefined. As such, multiple choice tests are not ideal for assessing students’ ability to synthesize information or explain ideas.

- Items provide a more reliable summary of learning than formats that require subjective marking. There is no marking bias from the instructor, because each answer is either correct or incorrect. Students also make fewer guesses on multiple choice questions than on true or false questions. To further increase reliability, all items on a test should support a single learning goal.

- Multiple choice items are also more efficient than other question types. Because each multiple choice question takes less time to complete, more material can be covered in a single evaluation. This gives a broader overview of student understanding, which increases the validity of the test.

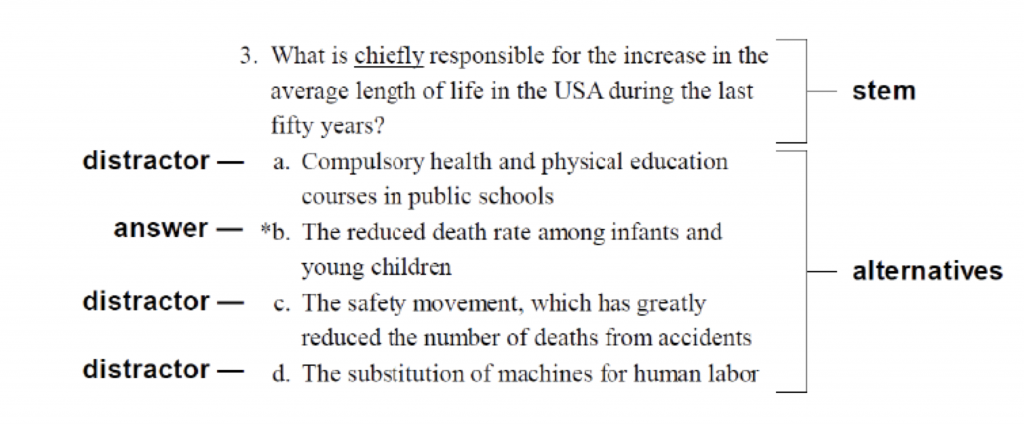

Validity and reliability are two key factors guiding the use of multiple choice tests. Covering a broader range of material increases the validity of the test, and well-constructed questions offer the opportunity to increase its reliability. Each question is usually composed of two distinct parts: a question stem and at least three answer choices. The choices consist of one correct answer and a number of distractors (incorrect options). The two parts can be summarized as follows:

- The question stem should be phrased positively, avoiding words such as ‘not’ or ‘except’, to minimize confusion. For example, “The Court’s decision did not…” introduces uncertainty into the question stem. A better phrasing would be “The Court enabled land owners to do as they pleased.” Sentences should be clear and concise.

- Distractors should also be succinct and arranged in an order that does not provide clues. Vague responses such as “all of the above” or “none of the above” should be avoided. Ideally, distractors should target misconceptions related to the material without hinting that they are incorrect.

According to Rodriguez (2005), “…MC items should consist of three options, one correct option and two plausible distractors. Using more options does little to improve item and test score statistics and typically results in implausible distractors”.

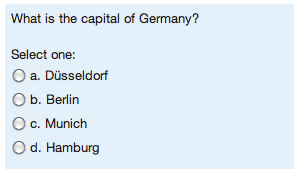

Constructing a Quality Stem

- Describe a definite problem. Just as learning objectives must be clearly stated, so must assessment questions. Vague questions may give students the opportunity to demonstrate inferred knowledge, but they do not directly evaluate whether students have achieved the learning goal.

- Do not include extra words or irrelevant information in the question stem, as they can confuse readers and potentially affect their responses.

- Avoid negative phrasing, which can also affect test scores by making the question confusing. Negatively phrased stems should be used only in specific situations, such as questions on lab safety practices, and the negative word should be clearly identified using a contrasting font effect.

- Write full sentences, preferably in question form. Partial sentences increase the cognitive load of the question, because students must repeatedly reread the stem as they consider each possible answer. Clear, complete question stems keep the focus on the student’s knowledge of the topic.

Creating Effective Distractors (Alternative Answers)

- The purpose of the alternative answers is to distract the student responding to the multiple choice question. Students who have achieved the learning goal will find it easy to ignore the distractors, while those who have not may select an incorrect answer. Distractors need to be believable answers, and the most effective source of alternatives is the common errors students make on the topic.

- Alternative answers should be clear and not lengthy. The goal is to assess a student’s knowledge, not their reading ability.

- Distractors need to be reasonable responses that are not wildly different from the correct answer. Odd formatting, length, word choice, or grammatical styling can alert students that an option is incorrect.

- The use of “all of the above” and “none of the above” is limited, because these options allow students to select a correct answer with only partial knowledge. If a student identifies more than one option as correct, they can select “all of the above” even if they are unsure about the remaining options. Similarly, if a student can eliminate one or two options, they may select “none of the above.” In both cases, students have a chance at the correct response even with limited understanding.

- Alternatives should be arranged in a consistent, logical order so that their position does not favor any answer, for example, in numerical or alphabetical order.

- All alternative answers must be plausible. Students who have achieved the learning objective can successfully ignore reasonable distractors, while students with only partial knowledge may hesitate and choose one as their response. Tests that contain obviously implausible answers can be up to four times as unreliable as those that do not.

Recommendations

- There should be consistency between the learning goals and the multiple choice questions on a test. Classroom activities should be selected carefully after the learning objectives are set, so that they specifically help students build knowledge on that topic. This approach is called backwards design, or backwards planning, in which the instructor creates learning activities with the end goal in mind. Each assessment, which may include multiple choice questions, analyzes the understanding and problem-solving strategies students have acquired through the learning process. Alignment between objectives, activities, and assessments is therefore essential.

- Emphasize higher-level thinking. Instructors are encouraged to create multiple choice questions based on the cognitive levels outlined in Bloom’s Taxonomy. This learning framework, which was revised in 2001, arranges cognitive skills in a hierarchy. From the lowest to the highest level of thinking, the categories are: Remembering, Understanding, Applying, Analyzing, Evaluating, and Creating. Bloom’s model can help instructors develop questions at the appropriate level.

- The focus should be on (1) constructing multiple choice items that assess higher-level cognition based on Bloom’s Taxonomy and (2) connecting those items to learning outcomes.

References:

- Rodriguez, M.C. (2005). Three options are optimal for multiple-choice items: A meta-analysis of 80 years of research. Educational Measurement: Issues and Practice, 24(2), 3.